
As reviewed in the previous section, there is compelling evidence for global heating in global land and ocean surface temperatures [paper], in other meteorological measurements such as barometric pressure [paper], and in satellite temperature measurements [paper].

Carbon dioxide (CO2) is a heat-trapping gas. More technically, it absorbs the infra-red energy radiating from the earth’s surface and turns this into thermal energy (heat), a process known as “radiative forcing”. Other gases such as methane and nitrous oxide trap heat even more effectively, although they are released into the atmosphere at a lower rate than CO2. Indeed, without these gases and water vapour, the earth’s atmosphere would be too cold to support life as we know it. Industrial activity releases carbon dioxide into the atmosphere, principally through the burning of fossil fuels for energy production and transport. The global growth rate of atmospheric CO2 has nearly quadrupled since the early 1960s (Fig. 7a).

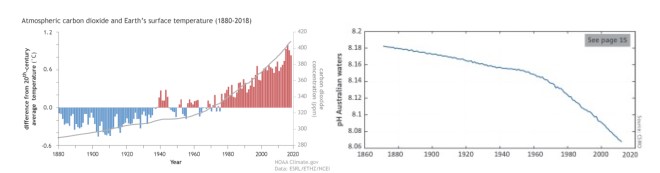

The annual global average carbon dioxide concentration at Earth’s surface for 2017 was the highest in the modern atmospheric measurement record and in ice core records dating back as far as 800,000 years. Because carbon dioxide dissolves into sea water to form carbonic acid, the impact of this increase can also be seen in changes in the ocean’s pH (Fig. 7b).

Figure 7: (left) Increasing global (land and sea) surface temperature (bars) and atmospheric CO2 (gray line) [site]; (right) Acidification (lowering pH) of Australian waters [report].
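Because pH is a logarithmic scale (pH is the negative base-10 logarithm of the hydrogen ion concentration), a small fall in pH corresponds to a large relative rise in acidity. The sketch below works through that arithmetic. The function name and the 0.1 unit drop are illustrative assumptions, not values read from Fig. 7b.

```python
def relative_acidity_increase(ph_drop: float) -> float:
    """Fractional rise in hydrogen ion concentration for a given fall in pH.

    pH = -log10([H+]), so lowering pH by `ph_drop` multiplies [H+] by 10**ph_drop.
    """
    return 10 ** ph_drop - 1.0


# Hypothetical example: a fall of 0.1 pH units (illustrative value only).
increase = relative_acidity_increase(0.1)
print(f"A 0.1 drop in pH means about {increase:.0%} more hydrogen ions")
```

The point of the example is that changes which look small on the pH axis are not small in chemical terms.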

The “heat-trapping” effect of CO2 can be measured and incorporated into comprehensive climate models (mathematical models of heat transfer and atmospheric kinetics) which can then be fit to temperature recordings and evaluated (see below).

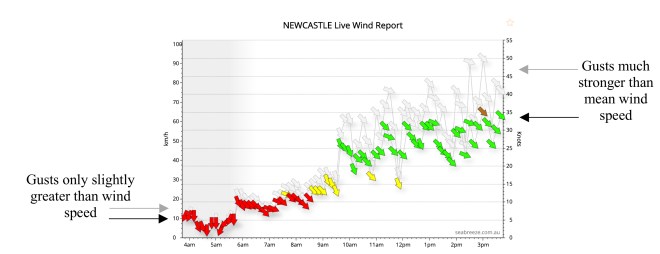

Note that weather forecasting involves modelling the movement of gases (the atmosphere) and fluids (the oceans) in the presence of heating. These models have become astonishing in their complexity, and their output can be viewed in the public domain through websites such as www.windy.com. However, the so-called “nonlinearities” in these models amplify fluctuations and give weather forecasting a temporal window of only about 10 days until “chaos” takes effect. For example, if the wind speed doubles, the gusts above the mean also increase substantially (Fig. 8). This feedforward amplification is relevant to all aspects of weather systems: increases in extreme events can be “super-additive” to increases in the average. The same effect is apparent in the super-additive increase in the number of very hot days (Fig. 5).

Figure 8: Wind speeds on a typical day in Newcastle. As mean wind speed increases through the day from ~10 km/h to 35 km/h, the additional gusts increase from 3 km/h to over 15 km/h [site].
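The limited forecast window described above can be illustrated with a toy system. The sketch below uses the logistic map, a standard textbook example of chaos, rather than a real weather model; the starting values and step count are arbitrary assumptions. Two runs that start almost identically drift apart until they are unrelated.

```python
def logistic_trajectory(x0: float, steps: int, r: float = 4.0) -> list:
    """Iterate the logistic map x -> r * x * (1 - x), a toy nonlinear system."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


# Two "forecasts" whose starting points differ by one part in ten billion.
run_a = logistic_trajectory(0.2, 60)
run_b = logistic_trajectory(0.2 + 1e-10, 60)

# Absolute gap between the two runs at every step.
gaps = [abs(a - b) for a, b in zip(run_a, run_b)]

for step in (0, 10, 20, 30, 40, 50, 60):
    print(f"step {step:2d}: gap = {gaps[step]:.2e}")
```

The gap starts far below anything measurable and grows to the size of the signal itself, which is why improving the initial measurements extends a forecast by only a limited amount.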

In contrast to weather, climate modelling addresses longer time scales. This is achieved by using a standard technique in physics known as the separation of time scales. In this manner, only slow changes in meteorological observables are modelled, consistent with the physics of the ocean, land and atmosphere, so that the fast, chaotic fluctuations no longer degrade forecast accuracy. As in all branches of science, climate models can be fit to data and evaluated; different models can be compared; and good models are incrementally improved through the process of data fitting, evaluation and innovation [site]. To ensure that models don’t become more complicated than they need to be, models can also be penalized for their complexity (roughly, how many parameters they contain).
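One common way to penalize complexity is the Akaike information criterion (AIC), which adds a cost of 2 per fitted parameter. The text does not say which criterion climate modellers use, so the sketch below is an assumption: it fits a constant and a linear trend to synthetic data (not real temperature records) and compares their AIC scores, where lower is better.

```python
import math


def fit_constant(ys):
    """Least-squares fit of y = c; returns the residual sum of squares."""
    mean = sum(ys) / len(ys)
    return sum((y - mean) ** 2 for y in ys)


def fit_linear(ts, ys):
    """Least-squares fit of y = a + b * t; returns the residual sum of squares."""
    n = len(ts)
    t_mean = sum(ts) / n
    y_mean = sum(ys) / n
    slope = (sum((t - t_mean) * (y - y_mean) for t, y in zip(ts, ys))
             / sum((t - t_mean) ** 2 for t in ts))
    intercept = y_mean - slope * t_mean
    return sum((y - (intercept + slope * t)) ** 2 for t, y in zip(ts, ys))


def aic(rss, n, k):
    """AIC for a least-squares fit with Gaussian errors, up to an additive constant."""
    return n * math.log(rss / n) + 2 * k


# Synthetic series: a slow trend plus a fast wiggle (illustrative values only).
ts = list(range(60))
ys = [0.02 * t + 0.1 * math.sin(1.7 * t) for t in ts]

aic_constant = aic(fit_constant(ys), len(ys), k=1)
aic_linear = aic(fit_linear(ts, ys), len(ys), k=2)
print(f"constant model AIC: {aic_constant:.1f}")
print(f"linear model AIC:   {aic_linear:.1f}")
```

In this toy case the extra parameter earns its keep: the linear model improves the fit by far more than the penalty it pays. A parameter that barely reduced the residuals would raise the score instead.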

Initial predictions of global heating due to increases in atmospheric CO2 were published nearly 50 years ago [paper]. Reasonably complex climate models, incorporating radiative forcing, were developed in the 1980s and predicted global heating with remarkable accuracy, considering the limited computational resources available at the time [paper, paper].

Climate modelling is now a mature scientific field, implemented globally through large-scale supercomputer simulations [paper]. As more complex negative and positive feedback loops have been incorporated (such as the mixed effects of clouds), these models have converged toward the observed heating with increasing accuracy [site].

Models that contain terms for the heating effect of carbon dioxide in the atmosphere do a far better job of explaining and predicting measurements than models that do not, even when a litany of other effects is modelled. For example, scientists from the CSIRO have shown that there is a less than 1 in 100,000 chance that the global average temperature over the past 60 years would have been as high without human-caused greenhouse gas emissions [paper]. The empirical data used in this paper are available in the public domain, so anyone can simulate and compare a competing model of climate change, such as one without radiative forcing or with other putative effects such as fluctuating solar heating.

The current models of atmospheric physics that support “man-made” climate change were recently summarised in a paper co-authored by over 100 scientists and subject to multiple rounds of independent peer review [paper]. The next update will be published in 2021 following a five-month open call for contributions.

NASA and 200 of the world’s other leading scientific organizations unequivocally support the science of man-made climate change [site]. These include the American Physical Society (which publishes the world’s leading physics journals) and the American Association for the Advancement of Science (which publishes Science).

Global heating depends on the Equilibrium Climate Sensitivity (ECS), which relates atmospheric CO2 concentration to atmospheric temperature (conventionally, the long-term warming produced by a doubling of atmospheric CO2). For decades, ECS has been estimated to be between 2.0 °C and 4.6 °C, with much of that uncertainty owing to the difficulty of establishing the effects of clouds on the Earth’s energy budget. A recent study in the prestigious journal Science concluded that projected ECS could be between 5.0 °C and 5.3 °C [paper].
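As a worked illustration, ECS is conventionally defined as the long-term warming from a doubling of atmospheric CO2, and warming is commonly approximated as scaling with the logarithm of concentration. The sketch below applies that approximation to the two ends of the long-standing ECS range quoted above. The function name and the concentrations of 280 ppm (a typical pre-industrial value) and 560 ppm are assumptions for illustration, not figures from the cited papers.

```python
import math


def equilibrium_warming(co2_ppm, baseline_ppm, ecs):
    """Approximate equilibrium warming (degrees C) under a logarithmic response.

    `ecs` is the warming per doubling of CO2, so the result is
    ecs * log2(co2_ppm / baseline_ppm).
    """
    return ecs * math.log2(co2_ppm / baseline_ppm)


# Assumed baseline of 280 ppm and a doubling to 560 ppm.
for ecs in (2.0, 4.6):
    warming = equilibrium_warming(560.0, 280.0, ecs)
    print(f"ECS {ecs} C per doubling -> {warming:.1f} C at 560 ppm")
```

By construction a doubling returns the ECS value itself, so the spread in ECS estimates translates directly into the spread in projected long-term warming.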

Next section: Is it “normal variation”?

Papers

A probabilistic analysis of human influence on recent record global mean temperature changes

Man-made carbon-dioxide and the “Greenhouse effect”

Climate impact of increasing atmospheric carbon dioxide

Global climate change as forecast by Goddard Institute for Space Studies three dimensional model

State of the Climate in 2018 (Bulletin of the American Meteorological Society)

Independent confirmation of global land warming without the use of station temperatures

Tropospheric Warming Over The Past Two Decades

Climate Change 2013: The Physical Science Basis

Observational constraints on mixed-phase clouds imply higher climate sensitivity

Reports

State of the Climate (Bureau of Meteorology and CSIRO)

Scientific websites

Climate at Glance: Global Time Series (NOAA)

How well have climate models predicted global warming? (Carbon Brief)
